The Agent Stack Is Solidifying, and Founders Are About to Feel It

Why OpenAI, Anthropic, and Block Are Setting the Rules for Agentic AI

Agentic AI is moving out of the demo booth and into real products. Founders are no longer just testing agents; they’re putting them into workflows that touch users, data, and money.

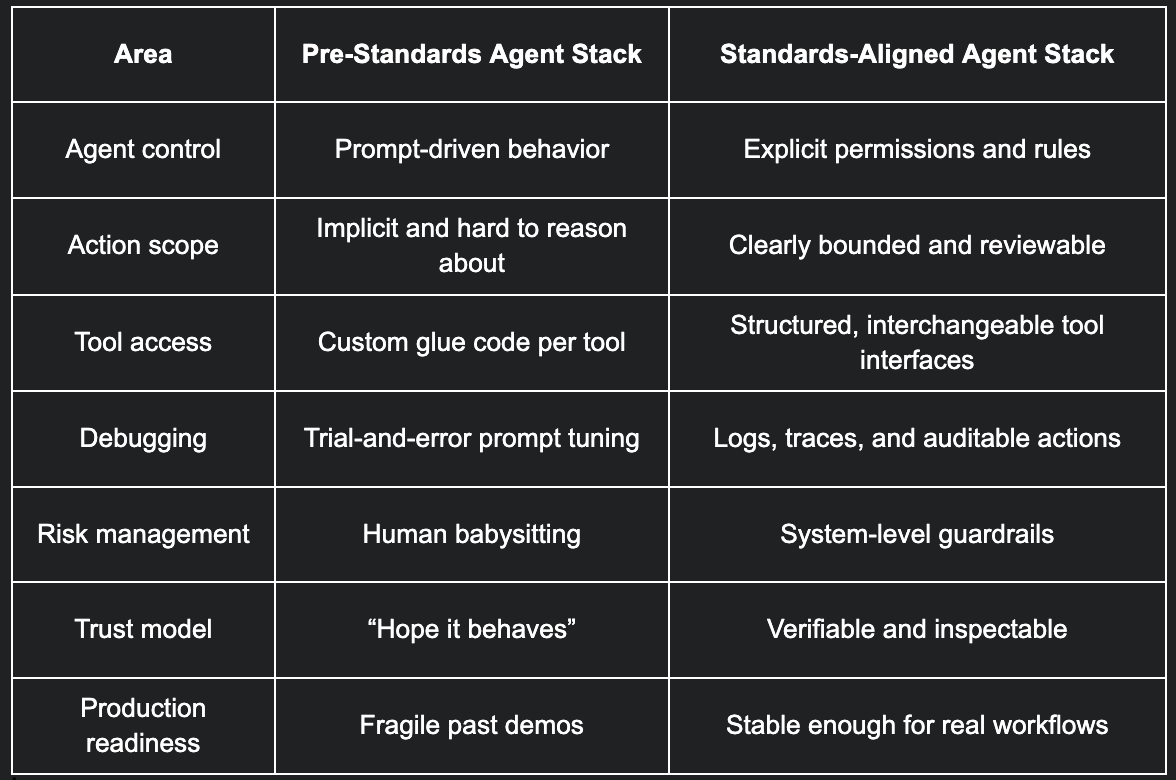

Most teams have built custom stacks held together by prompts, glue code, and best guesses. That works at first but often proves fragile: small changes break behavior, and debugging quickly becomes painful.

Normally, standards emerge only after problems come to light: recurring outages, eroding trust, and especially people losing money. That’s why this moment is worth noticing.

Standards emerging now, before those failures pile up, is an early signal that the agentic AI market is maturing. The shift from experiments to systems has already started.

What OpenAI, Anthropic, and Block are actually doing

OpenAI, Anthropic, and Block have come together under the Linux Foundation to define open standards for how AI agents operate across systems.

The goal isn’t to make models smarter; it’s to make agents more predictable. That means standardizing how agents take actions, call tools, and even interact with other software without breaking things.

Interoperability is the real goal. An agent built on one platform should be able to work across different tools and environments without having to be rewritten from scratch each time.

Block’s involvement is an important signal. Block is a payments company, so its presence points toward agents that can handle payments and operate in real-world workflows where mistakes matter.

The real problem this is trying to solve

AI agents are already fairly sophisticated, but they’re being asked to operate without clear boundaries.

Right now, most teams control agent behavior through prompts rather than system design. Those prompts end up standing in for permissions, rules, and safeguards. That works fine until it doesn’t, and once something goes wrong, it’s hard to tell why or where the failure started.

There’s also no shared way to define what an agent is allowed to do: which actions it can take, what data it can touch, and who or what it represents. Every team invents its own rules from scratch, which makes trust hard to establish.

Once agents start touching real users or real money, these gaps show up quickly, and failures become expensive and hard to unwind. That’s the problem these companies are trying to solve before it turns into a bigger mess. Establishing standards is the first step.

Why founders should care (even if they’re early)

Standards tend to quietly define what becomes “normal” in a market. Once that happens, anything built outside those patterns feels awkward or risky.

Founders who address these issues early avoid having to rip out core infrastructure later. Rewriting agent logic or tooling after customers depend on it is painful and slow.

There’s also a hiring angle. When architectures start to converge, it’s easier to bring in engineers who already understand the mental model. You spend less time explaining bespoke systems and more time building.

Plus, when it comes time to sell to larger customers, standards create trust. Enterprise buyers don’t want clever one-off designs. They want systems that look familiar and safe. Aligning early makes those conversations much easier.

What will likely get standardized first

The first items to get standardized won’t be flashy. They’ll be the boring parts that make agents safe to run in production.

Agent identity is one of them: clear ways to define what an agent is, who it represents, and how it authenticates across systems. Without that, everything else is guesswork.
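
The Python sketch below shows what "who it represents and how it authenticates" can look like in practice. It assumes the agent and the receiving service share a secret key, and it signs each request with HMAC-SHA256 from the standard library. The `AgentIdentity` type and its fields are hypothetical. The emerging standards may settle on something different, such as public-key signatures or OAuth-style tokens.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical identity record: the agent itself plus the party it acts for."""
    agent_id: str    # stable identifier for this agent
    principal: str   # the user or organization the agent represents
    secret: bytes    # key shared with the receiving service (an assumption)


def _canonical(body: dict) -> bytes:
    # Sorted keys give a stable byte string, so both sides hash identical input.
    return json.dumps(body, sort_keys=True).encode()


def sign_request(identity: AgentIdentity, payload: dict) -> dict:
    """Attach identity claims and an HMAC-SHA256 signature to an outgoing request."""
    body = {"agent_id": identity.agent_id, "principal": identity.principal, "payload": payload}
    signature = hmac.new(identity.secret, _canonical(body), hashlib.sha256).hexdigest()
    return {**body, "signature": signature}


def verify_request(request: dict, secret: bytes) -> bool:
    """Recompute the signature on the receiving side and compare in constant time."""
    body = {k: v for k, v in request.items() if k != "signature"}
    expected = hmac.new(secret, _canonical(body), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, request.get("signature", ""))
```

A service that receives the request can call `verify_request` to confirm which agent sent it and on whose behalf. Changing any field, including `principal`, invalidates the signature.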

Permissioning comes next: which actions an agent is allowed to take, under what conditions, and within what limits. Scoped actions matter a lot once agents start moving money, sending messages, or touching customer data.
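
Here is a sketch of scoped permissions enforced in code rather than in a prompt. The `AgentPolicy` and `Decision` names, the per-action caps, and the daily limit are illustrative assumptions and do not come from any published standard. The check runs before an action executes, and spending is recorded only when the action is allowed.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str


@dataclass
class AgentPolicy:
    """Hypothetical permission policy: granted actions plus spending limits."""
    allowed_actions: set[str]
    max_amount_per_action: dict[str, float] = field(default_factory=dict)
    daily_limit: float = 0.0   # a default of 0 blocks all spending until a limit is set
    spent_today: float = 0.0

    def authorize(self, action: str, amount: float = 0.0) -> Decision:
        """Check an action before it runs; record spending only if it is allowed."""
        if action not in self.allowed_actions:
            return Decision(False, f"action '{action}' is not granted")
        cap = self.max_amount_per_action.get(action)
        if cap is not None and amount > cap:
            return Decision(False, f"{amount} exceeds the {cap} cap for '{action}'")
        if amount > 0 and self.spent_today + amount > self.daily_limit:
            return Decision(False, "daily spending limit would be exceeded")
        self.spent_today += amount
        return Decision(True, "ok")
```

The sketch leaves out resetting `spent_today` each day and persisting it across restarts. A real deployment would need both.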

Tool calling is another big one. The question isn’t just which tools an agent can use, but how calls are structured, executed, and verified. This is where reliability usually breaks today.
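
Structuring, executing, and verifying a tool call might look like the minimal sketch below. `ToolSpec`, `ToolCall`, and the schema format (argument names mapped to Python types) are hypothetical. Real tool-calling interfaces typically describe arguments with JSON Schema instead. The example registry at the bottom is for illustration only.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class ToolSpec:
    """Hypothetical tool declaration: argument schema, implementation, and a result check."""
    name: str
    params: dict[str, type]
    run: Callable[..., Any]
    verify: Callable[[Any], bool]


@dataclass(frozen=True)
class ToolCall:
    """A structured request emitted by the agent instead of free-form text."""
    tool: str
    args: dict[str, Any]


def execute(call: ToolCall, registry: dict[str, ToolSpec]) -> Any:
    spec = registry.get(call.tool)
    if spec is None:
        raise ValueError(f"unknown tool: {call.tool}")
    # Structure: reject missing, extra, or mistyped arguments before anything runs.
    if set(call.args) != set(spec.params):
        raise ValueError(f"expected args {sorted(spec.params)}, got {sorted(call.args)}")
    for name, expected_type in spec.params.items():
        if not isinstance(call.args[name], expected_type):
            raise TypeError(f"argument '{name}' must be {expected_type.__name__}")
    # Execution: only validated arguments reach the tool.
    result = spec.run(**call.args)
    # Verification: check the result before the agent acts on it.
    if not spec.verify(result):
        raise RuntimeError(f"tool '{call.tool}' returned an invalid result: {result!r}")
    return result


# Illustrative registry with a single currency-conversion tool.
EXAMPLE_REGISTRY = {
    "convert_currency": ToolSpec(
        name="convert_currency",
        params={"amount": float, "rate": float},
        run=lambda amount, rate: round(amount * rate, 2),
        verify=lambda r: isinstance(r, float) and r >= 0,
    )
}
```

In this sketch, a call whose `amount` is a string is rejected before the tool ever runs. A call that produces a negative result is caught by the `verify` step.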

Finally, there’s logging and traceability: being able to see what an agent did, why it did it, and what happened as a result. Audit trails aren’t optional once agents operate in real workflows. They’re what make agents trustworthy.
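
As a rough illustration, an audit trail can record three things for every action: what was done, why, and what happened. The `AuditLog` class and its record fields are assumptions made for this sketch, and events are kept in memory only. Failures are logged and then re-raised, so the trail captures them without hiding them from the caller.

```python
import json
import time
from dataclasses import asdict, dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class TraceEvent:
    trace_id: str          # groups every step of one agent task
    agent_id: str          # which agent acted
    action: str            # what it did
    reason: str            # why, e.g. the plan step that triggered the call
    args: dict[str, Any]
    outcome: str           # "ok" or "error"
    result: Any            # return value, or the error description
    timestamp: float


class AuditLog:
    """Append-only, in-memory trail; a production system would write to durable storage."""

    def __init__(self) -> None:
        self._events: list[TraceEvent] = []

    def run(self, trace_id: str, agent_id: str, action: str, reason: str,
            fn: Callable[..., Any], args: dict[str, Any]) -> Any:
        """Execute fn(**args) and record the outcome whether it succeeds or fails."""
        try:
            result = fn(**args)
        except Exception as exc:
            self._events.append(TraceEvent(trace_id, agent_id, action, reason, args,
                                           "error", repr(exc), time.time()))
            raise
        self._events.append(TraceEvent(trace_id, agent_id, action, reason, args,
                                       "ok", result, time.time()))
        return result

    def export_jsonl(self) -> str:
        """One JSON object per line; non-serializable values fall back to str()."""
        return "\n".join(json.dumps(asdict(e), default=str) for e in self._events)
```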

Where blockchain quietly fits into this

Blockchain is entering the picture too, and there are several good reasons why.

On-chain logs can act as neutral audit layers. When an agent takes an action, especially one involving value or user impact, having an immutable record matters. The focus here is accountability.
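
The property that makes an on-chain record useful here is tamper evidence. The sketch below is not a blockchain. It is a plain hash-chained log in Python, where each entry's SHA-256 hash covers the previous entry's hash, so editing any past record breaks verification. The class name is hypothetical. A local chain can still be rewritten end to end by whoever controls it. That is why publishing the latest `head` hash somewhere neutral, for example on-chain, is what provides accountability. That anchoring step is assumed here and not shown.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, record: dict) -> str:
    """Hash the previous entry's hash together with this record's canonical JSON."""
    data = prev_hash + json.dumps(record, sort_keys=True)
    return hashlib.sha256(data.encode()).hexdigest()


class HashChainedLog:
    def __init__(self) -> None:
        self.entries: list[tuple[dict, str]] = []

    @property
    def head(self) -> str:
        """Latest hash; publishing it externally commits to the entire history."""
        return self.entries[-1][1] if self.entries else GENESIS

    def append(self, record: dict) -> str:
        h = entry_hash(self.head, record)
        self.entries.append((record, h))
        return h

    def verify(self) -> bool:
        """Recompute every link; any edited record breaks the chain from that point on."""
        prev = GENESIS
        for record, h in self.entries:
            if entry_hash(prev, record) != h:
                return False
            prev = h
        return True
```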

Stablecoins start to look like natural payment rails for agents. They’re programmable, global, and easy to integrate. If an agent needs to hold a balance or settle transactions, stablecoins solve that cleanly without complex banking logic.

Smart contracts can also serve as permission boundaries. Instead of trusting an agent to behave, you encode what it’s allowed to do. Limits, conditions, and safeguards live in code, not prompts.

The value of this infrastructure only becomes evident once agents move from experiments to systems that actually matter.

What founders should build around vs. avoid

Founders don’t need to predict the final standard to make good decisions now. The goal is to design systems that stay stable as the agent stack matures.

Design for durability

Clear action boundaries: Define exactly what an agent can and cannot do. Ambiguity turns into production risk fast.

Modular tool interfaces: Structure agents so tools can be swapped without rewriting core logic. This keeps your stack flexible as vendors change.

Observable behavior: Make agent actions visible and traceable. If you can’t see what it did and why, you can’t trust it.
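
One lightweight way to keep tool interfaces modular in Python is a `typing.Protocol`. Core agent logic depends only on the interface, and any provider with a matching method can be plugged in. `PaymentTool`, `pay_invoice`, and the two processor classes are hypothetical stand-ins, not real vendor SDKs.

```python
from typing import Protocol


class PaymentTool(Protocol):
    """Interface the agent's core logic depends on."""

    def send(self, recipient: str, amount_cents: int) -> str: ...


class FakeProcessorA:
    """Stand-in adapter for one hypothetical provider."""

    def send(self, recipient: str, amount_cents: int) -> str:
        return f"A-{recipient}-{amount_cents}"


class FakeProcessorB:
    """Stand-in adapter for another hypothetical provider with a different receipt format."""

    def send(self, recipient: str, amount_cents: int) -> str:
        return f"B:{amount_cents}:{recipient}"


def pay_invoice(tool: PaymentTool, recipient: str, amount_cents: int) -> str:
    """Core agent logic: unchanged no matter which provider backs the tool."""
    if amount_cents <= 0:
        raise ValueError("amount must be positive")
    return tool.send(recipient, amount_cents)
```

With this structure, switching vendors means writing one new adapter class. `pay_invoice` itself does not change.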

There are also a few patterns that feel faster at first but create drag later and become painful at scale.

Patterns that don’t age well

Prompts as control logic: Prompts are not guardrails, so using them to enforce rules breaks down under real-world pressure.

Vendor-locked workflows: Agent systems that work on only one platform are hard to scale and expensive to unwind.

Unchecked autonomy: More freedom doesn’t mean better results. Most failures come from agents doing more than the system can safely support.

These choices won’t make your agents flashier, but they’ll keep your system standing when things get real.

How this changes go-to-market and trust

The emergence of agent standards will change how founders go to market and earn trust. It will also shape how agents are reviewed, sold, and adopted by serious customers.

Auditable agent behavior makes security reviews easier. When founders can clearly explain what an agent does, what it’s allowed to do, and how it’s monitored, enterprise reviews move much faster.

The narrative also improves for founders evaluating agentic tools. Instead of vague promises about “AI magic,” agents show up as defined systems with clear boundaries. Founders can understand what an agent will do, what it won’t, and where the risk lives.

This clarity makes adoption easier. It’s much simpler to trust and deploy an agent when it looks like a product, not an experiment. When permissions, logging, and controls are designed in from the start, compliance becomes something founders can plan for rather than react to.

The timing advantage for founders

Timing matters here. Moving too early locks you into patterns that won’t survive. Waiting too long makes changes expensive and disruptive.

Right now is the window to align how agents fit into your architecture without having to unwind production systems later. You don’t need final standards to make better choices. You need to avoid designs that fight where things are clearly heading.

Final takeaway

As standards take shape, agentic AI is becoming something founders can build on without betting the company. Designing with them in mind now means moving faster later, with fewer rewrites and fewer surprises.